One-minute Dungeon is an interactive special effects showcase, my first proper Unity (4.5) project. I made it to learn Unity and practice C#.


Modeling was done in The Foundry’s Modo and exported in FBX format. The files contained an empty animation track which I didn’t want imported into Unity, so I created an asset post-processor script to strip it and to skip automatic material creation as well.

To simplify asset authoring I decided against using normal maps and created denser models instead. Proper shading was achieved by adding extra edge loops, bevels and the like.

The higher vertex count also came in handy when I used vertex colors to add details to the static geometry: Most background meshes use a shader which mixes 2 opaque and 2 “overlay” blended layers based on vertex colors. They usually represent dirt, moss, damage and brightness adjustment, in this order.

The diffuse map is accompanied by auxiliary textures, storing the dirt and moss grayscale images in the R and G channels. Another RGB image, a “mask map”, is combined with vertex colors to control blending over the base diffuse texture.

The first two layers drive three-color gradients, defined by shader parameters, similar to how Photoshop’s “Gradient map” works.
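To illustrate the gradient-map idea, here is a minimal Python sketch (not the original shader code). The function names are hypothetical, and placing the middle color exactly at 50% gray is my assumption; the real shader may position it differently.

```python
def lerp(a, b, t):
    """Linearly interpolate between two RGB tuples."""
    return tuple(x + (y - x) * t for x, y in zip(a, b))


def gradient_map(value, dark, mid, light):
    """Map a grayscale value in [0, 1] onto a dark -> mid -> light color ramp."""
    v = min(max(value, 0.0), 1.0)  # clamp, like saturate() in a shader
    if v < 0.5:
        # Lower half of the range blends dark -> mid.
        return lerp(dark, mid, v * 2.0)
    # Upper half blends mid -> light.
    return lerp(mid, light, (v - 0.5) * 2.0)
```

A dirt layer, for example, would feed its grayscale texture value into `gradient_map` with three brownish colors taken from the material parameters.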

The damage layer’s blend mode is similar to “overlay”: dark colors darken the surface, light colors brighten it, and 50% gray doesn’t change anything. The last layer, brightness adjustment, does the same thing but without a bitmap involved: vertex alpha is directly used to adjust pixel brightness.
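For reference, the textbook “overlay” formula behaves as described: a blend value of 0.5 leaves the base untouched. This Python sketch shows that standard formula for a single channel; the shader is only described as similar to it, so treat this as an approximation rather than the exact math used.

```python
def overlay(base, blend):
    """Standard overlay blend for one channel, both inputs in [0, 1]."""
    if base < 0.5:
        # Dark base: multiply-like branch.
        return 2.0 * base * blend
    # Bright base: screen-like branch.
    return 1.0 - 2.0 * (1.0 - base) * (1.0 - blend)
```

For the brightness adjustment layer the `blend` input would simply be the vertex alpha instead of a texture sample.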

During asset authoring I wanted to get an idea of how things were going to look without actually exporting to Unity over and over. Unfortunately Modo 501’s realtime viewport is not very capable and the offline render engine can’t use vertex colors, which made previewing the work difficult. Another problem is that vertex colors can’t be painted per channel. To work around these limitations I wrote a Lua script which creates 4 weight maps and combines them back into RGBA vertex colors before export. Weight maps can be used in the shader tree, so Modo’s in-viewport renderer, RayGL, then provided a quick if rough estimate of the final surface. Once things seemed fine I proceeded with the export and checked the mesh in Unity with the final shaders.

Every custom shader was developed in UDK and ported to Unity manually: the Unreal engine’s material editing workflow is superior when it comes to speed, flexibility, experimentation or debugging. (The excellent Shader Forge was in a very early alpha stage back then so I didn’t use it.)

These were the steps of shader creation: First I roughly created the visual I was looking for, or experimented with different effects until I found something I liked. At that stage the node network was messy and unoptimized as iteration time took precedence. When I was happy with the result I organized the shader setup and simplified it as much as I could. Sometimes more subtle features got cut entirely as I tried to balance resource cost, visual flair and usability.

Then came the part where I recreated the logic in a text file for ShaderLab. While basic operations were easy to port, there are many differences between the two engines which made porting trickier. 3D and UV coordinate systems, Unreal’s custom implementation of trigonometric functions and common shader input data formats are the most notable differences. Occasionally I spent a day on a single Unreal node until I found a usable ShaderLab implementation. However, I now have a series of copy-paste-ready building blocks which make porting faster and less frustrating.

After the shaders were compiled and materials were set up, the deferred lighting render path was used to show them. This made soft particles possible and allowed me to use many realtime lights to tweak the lightmap as Beast tends to be headstrong at places. These hack lights have relatively small radius and no shadow casting to keep their cost down.

After slightly modifying the Internal-PrePassLighting.shader file I changed the light’s behavior so the alpha of the light color now controls diffuse contribution: at 0 the light only produces specular highlights and has no effect on the diffuse term. This feature can be useful when fine tuning visuals.

Vertex alpha adjusts brightness, which helps to emphasize geometric details and can add some extra variation.

The longer blades of grass are made up of tessellated planes. (Looking at them now, I should’ve bent them more.)

They use an alpha blended shader where polygon sorting within a mesh is a problem. Fortunately the constrained camera position allowed me to achieve proper order manually by cutting and pasting the planes during modeling.

The tessellation was needed for the vertex shader which mimics waving in the breeze. The displacement is basically a sine based, pseudo-random noise taking vertex positions and normals into account. Three properties can be controlled through vertex colors:

– Amplitude. Outer regions like the tip of the blades tend to swing wilder than the stems which shouldn’t move at all.

– Scale controls how much the noise changes over a given distance. This value is low on the vertices of wide leaves, for example, so different parts don’t move far from each other. Independent structures like grass blades use a high value so even close vertices can move in very different ways.

– Speed affects how fast the noise moves through the world. Low values are for hard to reach, covered vertices, not directly affected by wind.

These features were meant to be used more extensively in a garden area which was later cut.
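The three vertex-color controls can be sketched roughly like this in Python. The exact noise function isn't given above, so the product of two sines, the constants 2.3 and 1.7 and the choice to displace along the normal are all assumptions made for illustration.

```python
import math


def wind_offset(position, normal, time, amplitude, scale, speed):
    """Return a displacement vector for one vertex (hypothetical helper)."""
    px, py, pz = position
    # Scale decides how quickly the phase changes across space,
    # speed decides how quickly it changes over time.
    phase = (px + py + pz) * scale + time * speed
    # Two sines with unrelated frequencies give a less regular wobble.
    wobble = math.sin(phase) * math.sin(phase * 2.3 + 1.7)
    # Amplitude (0 at the stems) limits how far the vertex can travel.
    return tuple(n * wobble * amplitude for n in normal)
```

With `scale` at 0 every vertex gets the same phase, so a wide leaf would move as one piece; with `amplitude` at 0 the vertex stays put regardless of the other two values.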

The grass texture (just like the business end of the broom later on) was rendered using Modo’s fur feature. A blurred version was blended with the original for additional thickness which really helps the look at lower MIP levels.

The diffuse and auxiliary textures for the hive were baked in Modo from a high poly mesh containing high resolution image maps and a few procedural layers.

The second image contains emission coming deep from the hive (red channel) and near the entrance (green channel) which lights up that area when the fireflies move through.

For the light of the critters shining through the thin walls first I generated a texture in FilterForge and made it pan over the surface:

The red channel’s cells provided the basis for the random pulsation of the green channel’s radial gradients. A few simple math operations made the cells fade in and out at given speed, spending slightly more time at the dark end. The result is then mixed with a similar branch having different panning direction, speed and tiling.

A single float material parameter controls everything in the shader: as it moves from 0 to 1 it increases the overall hive glow and fades in the entrance glow, which peaks around 0.5. The light spots are made visible and their corresponding UV coordinates are scaled up with the entrance as center.

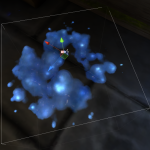

The fireflies have a shader which displays a three frame animation encoded in the RGB channels of the input image. The UV coordinates are rotated back and forth to produce the subtle swing.
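As a rough Python illustration of the two tricks in that shader: frame selection from the color channels and a back-and-forth UV rotation. The frame rate, swing angle, frequency and the pivot at the sprite center are hypothetical values, not the ones used in the project.

```python
import math


def firefly_frame(rgb, time, fps=8.0):
    """Pick one of three grayscale frames packed into the R, G and B channels."""
    index = int(time * fps) % 3
    return rgb[index]


def swing_uv(uv, time, max_angle=0.3, frequency=1.0, pivot=(0.5, 0.5)):
    """Rotate UVs around the pivot by an angle that oscillates over time."""
    angle = max_angle * math.sin(2.0 * math.pi * frequency * time)
    s, c = math.sin(angle), math.cos(angle)
    x, y = uv[0] - pivot[0], uv[1] - pivot[1]
    return (pivot[0] + x * c - y * s, pivot[1] + x * s + y * c)
```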

The material is used on a Shuriken particle system which acts only as a sprite renderer and data pool; the logic behind particle spawn and movement is implemented in a custom component. I used the Visual iTween path editor to create several movement paths for the fireflies. Then I randomly assign a spline to each particle and move the particles along it with configurable speed, delay, deviation, wobble amplitude and frequency.

The other Shuriken emitter used in the scene is the dripping glow-honey. Its shader uses a texture that stores 2D normal data (RG) and a static fresnel term (B). The first two channels distort the UVs of a noise texture to make it look like the droplet refracts light coming from the background. After some brightness and contrast adjustments the result drives a three-color gradient to define the final colors.

Due to limitations of Shuriken the stretching of the honey drops is done in the vertex shader. Stretch amount, brightness variations and random time offset are controlled through vertex colors.

(At this point I was really unhappy with the in-built particle system so I started developing my own, called Amps, which was used for the rest of the effects.)

The dripping honey ends up in the barrel. The surface of the honey there has a simple shader: a noise image is run through a sine function and the result is then used to distort the UV coordinates of a texture sampler working with the same texture.

The puddles of honey on the floor are projected by the Decal System plugin. I created custom shaders for them which support texture atlases: only the specified area of the whole image will be displayed. Color and contrast tweaks are also implemented.

The texture for the splats was generated by the Liquid Drops filter for Filter Forge.

As the broom disappears the tornado shows up. Its shader relies on multiple layers of a smoke texture, each stretched, sheared and panned differently. The mesh has two shells, an inner and outer one. The latter is marked using vertex colors in order to add a much less opaque set of puffs, increasing the depth of the effect.

The reflection vector’s X component was used to create a “vertical fresnel” as base opacity. This was then mixed with the animated smoke and vertex alpha.

The subtle dance of the mesh is produced by sine functions driving other sines. The source data is time plus, to make the bottom end move differently, V coordinates. The latter was used again later in this part to decrease displacement amplitude toward the top.

The final component of the effect is the dust trail, created by an Amps emitter. In it the governing rules for the particles are defined, like the following examples:

– Base spawn rate is multiplied by the scale of the tornado GameObject. This way I can modify the tornado’s animation however I want, the dust particles will automatically show up when needed.

– Remember where the tornado was when the particle was born and keep checking where it is now. Put the particle at a random position between the two. This makes the dust puffs more or less reluctantly follow the whirlwind.

– Rotate the sprites around their pivot then offset the pivot over time. The quads start revolving around their center but end up orbiting the base of the tornado.

– A particle gets closer to death as it approaches a randomly picked age, dies on reaching it. This “closeness to death” value (0..1) drives most curve parameters (fading in-out, growing, etc) instead of absolute times. This means that I only need to tweak the death condition, the range of possible ages, and everything else adapts instantly. At some point there was another condition beside age: particles wandering too far were also killed (kill by distance to tornado). It was later removed but it was a cheap experiment as it didn’t involve the adjustment of other parts in the particle system.
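The last three rules can be sketched in Python as follows. This is not Amps code: the class, its fields, the age range and the triangle-shaped fade curve are all made up for illustration, and the fixed per-particle lag factor is my reading of "a random position between the two".

```python
import random


class DustParticle:
    """Hypothetical stand-in for one dust puff following the tornado."""

    def __init__(self, tornado_position, rng, min_age=1.0, max_age=3.0):
        self.birth_tornado_position = tornado_position
        self.death_age = rng.uniform(min_age, max_age)  # randomly picked age
        self.lag = rng.random()  # 0 = stays at birth place, 1 = glued to tornado
        self.age = 0.0

    def closeness_to_death(self):
        """0 at birth, 1 at (or after) the picked death age."""
        return min(self.age / self.death_age, 1.0)

    def position(self, tornado_position):
        """A point between where the tornado was at birth and where it is now."""
        return tuple(b + (n - b) * self.lag
                     for b, n in zip(self.birth_tornado_position, tornado_position))

    def opacity(self):
        """Fade in and out, driven by closeness to death instead of seconds."""
        c = self.closeness_to_death()
        return 1.0 - abs(2.0 * c - 1.0)
```

Because `opacity` only looks at the 0..1 value, changing `min_age` and `max_age` (or adding another kill condition) needs no retuning of the curve.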

The bubbles in the cauldron are also created by an Amps emitter. The more important rules are these:

– Kill after given age but bigger bubbles live longer.

– Move a particle in a random direction but move big ones slower. The rest of the setup deals with starting position, scale, growth and shader animation through vertex color.

This emitter has a child particle system for the steam puffs released from the bubbles. Every child particle picks one from the parent’s particle pool and monitors its properties.

– Spawn rate is the parent’s particle count multiplied by a constant.

– Start a timer when the associated parent particle is near death. When a child particle is born it’s placed at its related parent particle. However, it stays inert (zero scale, no animation) until the bubble has almost popped. Then a timer starts which drives all the expected particle animations (growth, fading in, etc) so the puff rises as the bubble bursts.

Recent developments in Amps would now allow a simpler way to do this: parent particles raising events which control the child emitter. A bubble could request a steam puff and send along a data package too, to be used by the child particle: “I want a particle at my position with my size as its starting size.”

The soup is based on a normal map/height map pair generated by a custom FilterForge filter.

Multiple layers of these rotating textures are blended based on more transformed textures. The lighting is hardwired with two distant lights of constant direction and color, just like on the bubbles.

The fire and steam shaders are pretty much straight ports of the UDK materials I made for my previous project: Video / Behind the scenes.

Decals were used on the charred firewood: they project a material with multiplicative blend mode. The input texture is adjusted so the result behaves like “overlay” with control over highlight color, brightness and saturation.

The glassware with fluids uses a shader which allows control over liquid amount and orientation regardless of mesh shape or rotation. The first step is gathering the object relative position for every pixel. In the UDK version I use “Actor position minus pixel world position” but that solution doesn’t work in Unity with batching enabled because then multiple GOs can get combined into a single one. So I wrote a Lua script in Modo which stores vertex positions as vertex colors (normalized to the bounding box).

This data, combined with a vector parameter defining the surface of the liquid, produces a mask to blend between glass and fluid. (Vertex alpha acts as another mask to constrain the effect to the inner parts.)
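A minimal Python sketch of how such a mask could be computed. How the vector parameter encodes the liquid surface isn't spelled out above, so I assume a surface normal plus a separate fill level here; the function and argument names are hypothetical.

```python
import math


def fluid_mask(local_pos, surface_normal, fill_level, inner_mask=1.0):
    """Return 1.0 where liquid should show, 0.0 where plain glass remains.

    local_pos is the bounding-box-normalized position read from vertex color
    (each component in 0..1); inner_mask stands in for vertex alpha.
    """
    length = math.sqrt(sum(n * n for n in surface_normal))
    normal = tuple(n / length for n in surface_normal)
    # Re-center so the middle of the bounding box is the origin.
    centered = tuple(p - 0.5 for p in local_pos)
    # Signed height of the point along the surface normal.
    height = sum(c * n for c, n in zip(centered, normal))
    below_surface = 1.0 if height <= fill_level else 0.0
    return below_surface * inner_mask
```

Tilting `surface_normal` tilts the liquid level without touching the mesh, which is what the physics-driven animation relies on.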

So now it’s known where to show glass and where to put the liquid. The glass surface is a simple deal, diffuse and opacity textures, also affecting specularity and reflectivity. The fluid is more complex so first let’s see how the front faces are rendered in this double sided material.

Any part of the mesh which faces toward the viewer shows the liquid as totally opaque so the backsides, used later on, are covered up. To provide the illusion of transparency a cube map is used to fake a blurred and distorted background. The camera vector (tangent space view direction) is inverted just before it’s fed to the cube map samplers so the result looks like refraction instead of reflection. There are two samplers tweaked differently then added together to make a volumetric-ish effect.

A cube map reflection, created the usual way, is added to both glass and fluid areas. What is not so usual is the way normals are presented to the reflection and refraction branches of the shader: on front faces the tangent space up vector is used (plain geometry normals) but the back faces get a single constant vector instead, the Fluid Surface Vector material parameter. That makes it look like the fluid has a flat cap, although we are just looking at pixels where the topology has no effect on reflection and refraction as they all face a single, given direction.

In the scene the Fluid Surface Vector material parameter is animated by a custom script which monitors an invisible rigid body box attached to the also physics driven bottle: a push of the bottle makes the box swing, the material animator script copies its orientation to the material parameter.

The card shader takes the input image, stretches and offsets it so only one card face, at a given index, is visible. Another sampler does pretty much the same but it also flips the image. The two results are blended by a mask so the flipped version shows up at the bottom half.
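The UV math behind this could look something like the following Python sketch. The atlas layout (a grid of `columns` by `rows` faces, index counted row by row) and the 180 degree flip for the bottom half are assumptions; the actual texture may be arranged differently.

```python
def card_uv(uv, index, columns, rows):
    """Map a quad's 0..1 UV to the atlas region of the card face at `index`."""
    u, v = uv
    if v < 0.5:
        # Bottom half of the card: sample the face turned upside down.
        u, v = 1.0 - u, 1.0 - v
    col = index % columns
    row = index // columns
    # Shrink the UV to one cell, then offset it to the chosen cell.
    return ((col + u) / columns, (row + v) / rows)
```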

The opacity is procedurally generated from the UV coordinates and features rounded corners with variable corner radius.
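One common way to get rounded corners from UVs alone is a rounded-rectangle distance test, sketched below in Python. I'm assuming that approach and a square UV space for simplicity; a real card would also need the aspect ratio factored in, and a shader would likely soften the edge instead of using a hard cut.

```python
import math


def card_opacity(uv, radius):
    """Return 1.0 inside a rounded square covering the 0..1 UV range, else 0.0."""
    # Fold all four quadrants onto one by measuring from the center.
    x = abs(uv[0] - 0.5)
    y = abs(uv[1] - 0.5)
    # Half-size of the inner square whose corners are the circle centers.
    inner = 0.5 - radius
    dx = max(x - inner, 0.0)
    dy = max(y - inner, 0.0)
    # Points farther than `radius` from the inner square are outside the card.
    return 1.0 if math.hypot(dx, dy) <= radius else 0.0
```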

This shader is used in an Amps emitter to build up the house of cards. The emitter produces two sided quads where the front side gets a random card face index via the red vertex color channel while the back side is always index 0.

Particle properties are driven by per particle timers which make them behave like a LIFO stack rather than the usual FIFO: the later a card left the deck the sooner it will return.
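The stack-like timing can be expressed with nothing more than per-card leave and return times. A small Python sketch, where the fixed interval between cards and the hold time are hypothetical parameters:

```python
def card_schedule(count, interval, hold_time):
    """Return (leave_time, return_time) per card, in deck order."""
    last_leave = (count - 1) * interval
    schedule = []
    for i in range(count):
        leave = i * interval
        # Cards that left later come back earlier: reverse the order.
        back = last_leave + hold_time + (count - 1 - i) * interval
        schedule.append((leave, back))
    return schedule
```

For three cards, a one second interval and a two second hold this gives `[(0.0, 6.0), (1.0, 5.0), (2.0, 4.0)]`: the last card out is the first one back.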

During its lifetime a card moves from the top of the deck to a position defined by an empty Game Object. From there it flies off to its final place in the house of cards while also rotating to an appropriate angle. Location and orientation for each card is defined by a mesh which provides vertex and polygon data to be used freely in the particle system (polygon center and normal in this case).

The mesh sampler module runs in a mode where a particle is associated with a target polygon by index: the first card goes to the first polygon, second card to the second polygon and so on. Manually editing polygon order in Modo allowed me to control how the structure is being built.
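To show what the particles read from the layout mesh, here is a Python sketch that derives a center and a normal per polygon and hands them out by index. It is not the Amps mesh sampler; the function names are invented and the polygons are assumed to be planar with counter-clockwise winding.

```python
import math


def polygon_center(vertices):
    """Average of the polygon's vertex positions."""
    n = len(vertices)
    return tuple(sum(v[i] for v in vertices) / n for i in range(3))


def polygon_normal(vertices):
    """Unit normal from the cross product of two edges sharing vertex 0."""
    a = tuple(vertices[1][i] - vertices[0][i] for i in range(3))
    b = tuple(vertices[2][i] - vertices[0][i] for i in range(3))
    cross = (a[1] * b[2] - a[2] * b[1],
             a[2] * b[0] - a[0] * b[2],
             a[0] * b[1] - a[1] * b[0])
    length = math.sqrt(sum(c * c for c in cross))
    return tuple(c / length for c in cross)


def card_targets(polygons):
    """Card i gets the center and normal of polygon i, in mesh order."""
    return [(polygon_center(p), polygon_normal(p)) for p in polygons]
```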

After spending a given amount of time standing still the cards fly off touching another empty GO as they return to the deck.